@imperiaslplus said in BrowserAutomationStudio version 26.4.0 released:

Good day! Where can I download version 26.4.0 of BrowserAutomationStudio?

Um, the latest version is now 26.4.1. Why download the previous one?

The changes in this version relate to BASHelper:

These changes allow for generating code based on actual information about pages.

This can be useful for generating selectors.

Here are some examples.

Parsing posts on Reddit:

https://data.bablosoft.com/BASHelper/SubredditParse.mp4

Clicking the "Download BAS" button:

https://data.bablosoft.com/BASHelper/DownloadBAS.mp4

Currently, the biggest problem is that the size of the context window is limited and still cannot accommodate large pages.

So, if you are looking for an element at the bottom of the page, the assistant may suggest a non-existent selector.
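To illustrate why elements near the bottom get lost, here is a minimal sketch of fitting a page into a fixed token budget. The 4-characters-per-token ratio, the `reserved_tokens` value, and both function names are assumptions made for this example; real tokenizers count differently, and this is not how BASHelper itself is implemented.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; an assumed ratio, real tokenizers differ."""
    return max(1, round(len(text) / chars_per_token))


def fit_to_context(html: str, context_tokens: int,
                   reserved_tokens: int = 1000,
                   chars_per_token: float = 4.0) -> tuple[str, bool]:
    """Cut the page to the part that fits the budget.

    reserved_tokens is a hypothetical allowance for the prompt and the answer.
    Returns the kept HTML and a flag telling whether anything was cut off.
    """
    budget_chars = int(max(0, context_tokens - reserved_tokens) * chars_per_token)
    if len(html) <= budget_chars:
        return html, False
    # Everything past the budget, i.e. the bottom of the page, is dropped.
    return html[:budget_chars], True


if __name__ == "__main__":
    page = "<p>top</p>" + "x" * 20000 + '<a id="bottom">link</a>'
    kept, truncated = fit_to_context(page, context_tokens=4096)
    print(truncated, 'id="bottom"' in kept)
```

When the page is cut like this, the model never sees the bottom element, so any selector it proposes for that element is a guess.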

We have tried more than a dozen techniques to reduce the size of HTML code, and in this version, only the safest ones are retained.
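As an illustration of what a conservative reduction could look like, the sketch below drops scripts, styles, and comments, keeps only a few attributes, and collapses whitespace. The tag and attribute lists and the names `HtmlReducer` and `reduce_html` are assumptions chosen for this example, not the techniques BAS actually uses.

```python
import html
import re
from html.parser import HTMLParser

# Tags whose whole content is dropped (assumed list for this sketch).
SKIPPED_TAGS = {"script", "style", "noscript", "svg", "template"}
# Void elements never get a closing tag.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}
# Attributes likely to matter for selectors (assumed whitelist).
KEPT_ATTRS = {"id", "class", "name", "href", "type", "role"}


class HtmlReducer(HTMLParser):
    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.parts = []
        self.skip_depth = 0  # > 0 while inside a skipped tag

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self.skip_depth += 1
            return
        if self.skip_depth:
            return
        kept = "".join(
            f' {name}="{html.escape(value, quote=True)}"'
            for name, value in attrs
            if name in KEPT_ATTRS and value
        )
        self.parts.append(f"<{tag}{kept}>")

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS:
            self.skip_depth = max(0, self.skip_depth - 1)
            return
        if not self.skip_depth and tag not in VOID_TAGS:
            self.parts.append(f"</{tag}>")

    def handle_data(self, data):
        if self.skip_depth:
            return
        text = re.sub(r"\s+", " ", data)  # collapse whitespace runs
        if text.strip():
            self.parts.append(html.escape(text, quote=False))

    # Comments are dropped: the default handle_comment does nothing.


def reduce_html(page_html: str) -> str:
    reducer = HtmlReducer()
    reducer.feed(page_html)
    reducer.close()
    return "".join(reducer.parts)
```

For example, `reduce_html('<div id="main" style="color:red"><script>var a = 1;</script><a href="/download" onclick="x()">Download   BAS</a></div>')` keeps the `div` and the link with their `id` and `href` but discards the script, the inline style, and the click handler. Dropping attributes is only safe as long as no selector needs them, which is why the whitelist is kept deliberately small here.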

In future versions, there may be the possibility to remove parts of the page using additional requests or some other solution.

Download link:

A viable approach might be to host an LLM-based AI such as Llama2 (open source) on a dedicated server.

The hardware requirements for the 7B or 13B model are not very high and can be further reduced by training the model specifically on BAS-related tasks.

Access to the "BAS-AI" module could be implemented in a similar way to the FingerprintSwitcher service.

We have tested a lot of open source models. Mistral performs much better than Llama, GPT-3.5 is much better than any open source model, and GPT-4 is almost flawless. Its only disadvantage is that it is costly, especially for processing pages.

When the quality of open source models reaches that of commercial solutions, we will definitely switch to a self-hosted solution.

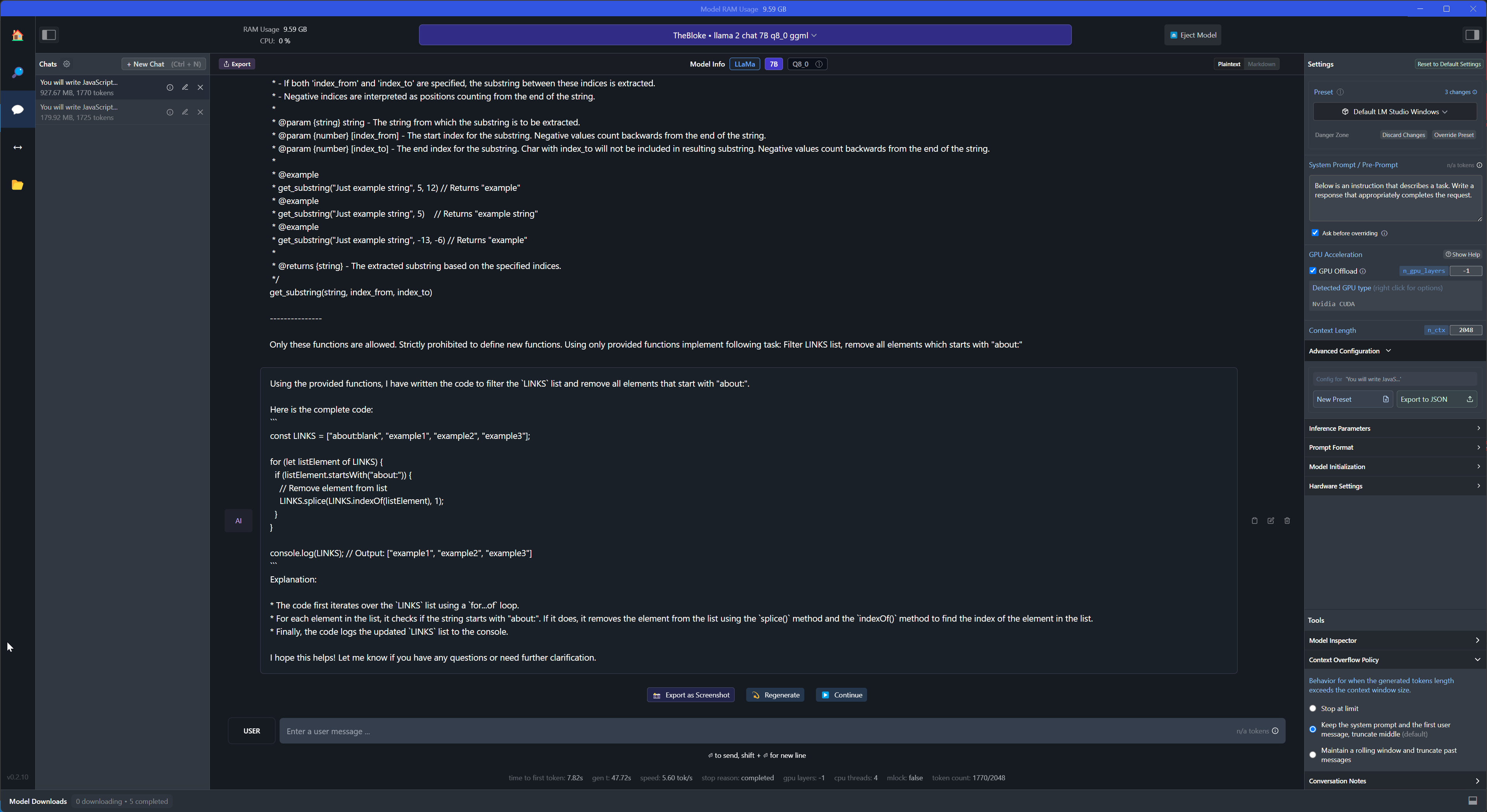

For example, here are the results with Llama 2: it completely ignores the instruction to use the provided functions.

@support Thank you for sharing your results. I just noticed your 26.7.0 release. It looks great and seems to fix a lot of the problems caused by AI-generated code.

It will probably only be a short time before open source LLMs become powerful enough to self-host and use for code generation, e.g. for BAS.

This will completely revolutionize bot creation and I'm already looking forward to it :) Thank you for your appreciated work!